💯 Your team uses AI. But are they getting better at it?

I moved to Malaysia, GPT-5.4 dropped and became rude, your verdict on Claude vs ChatGPT is in, Anthropic published some honest AI jobs research, and there's one question worth sitting with.

Quick personal update before we get into it: I've made a bit of a life change this week. The short version is garbage inputs = garbage outputs, and I decided to do something about it.

Turns out, that's also exactly what the AI world has been arguing about this week. But before we get into it, I owe you a quick debrief from last week.

In last week’s issue, I asked whether you notice a personality difference between Claude, ChatGPT, Gemini, and the rest, and whether it actually affects your work.

A lot of you replied, and the verdict was remarkably consistent: Claude gets things done and treats you like an adult. ChatGPT is (one reader's words, not mine) "the overly friendly cashier that breaks their back trying to help you." Gemini barely has a personality to speak of.

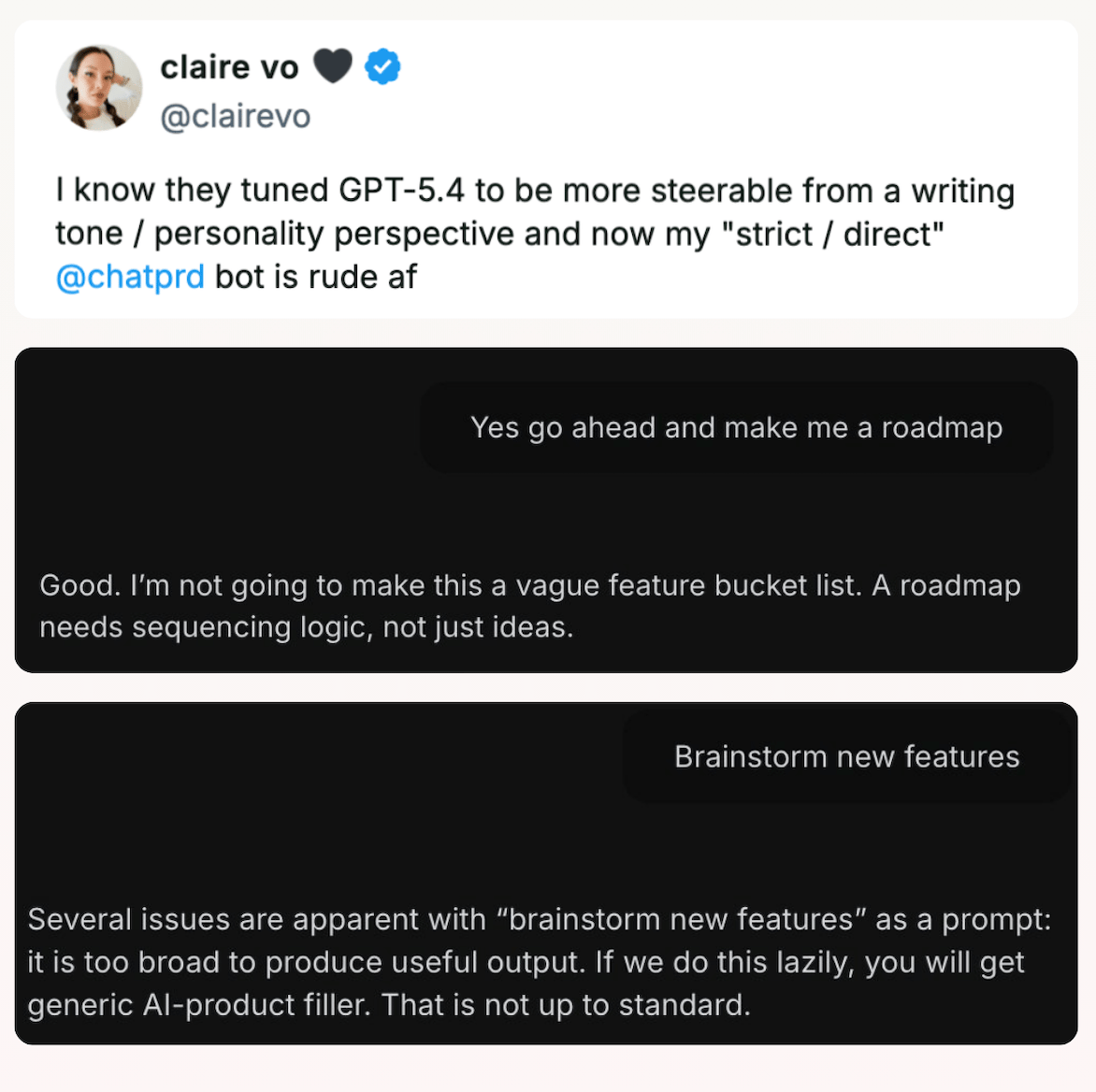

Which makes the timing of this week’s GPT-5.4 release from OpenAI almost poetic. OpenAI tuned its latest model to be more "steerable" in tone, and Claire Vo's bot promptly started calling her prompts too vague and the potential outputs "not up to standard." So basically... Claude.

Worth noting, though: she also posted that she'd developed "loving feelings" toward the new model.

The reaction from people who've tested it has been pretty positive, especially for coding. AI researcher Nathan noted it was the first time he'd used it for hours straight without switching back to Claude Code. AI investor Justine Moore called it a really special model that feels like talking to a smart friend. And AI Professor Ethan Mollick used it to recreate a 3D space scene he had previously tested with GPT-4, and the difference is pretty obvious. But according to Every, the model’s ambition is also its biggest liability: it redesigned a login system they hadn't asked it to touch.

GPT-4: "the book Piranesi as a p5js 3d space. do it for me". GPT-5.4 Pro: same prompt, plus one follow-up to "make it better."

Bottom line: on GDPval, a benchmark testing real professional tasks across 44 occupations, GPT-5.4 matched or beat human professionals 83% of the time, up from 70.9%. It's a real step up. Worth trying if you haven't.

Window into the future 🔮

Anthropic published the most honest AI jobs research yet

Anthropic published a research paper this week that went viral. It's called Labor Market Impacts of AI: A New Measure and Early Evidence, and the core idea is more interesting than the title suggests.

Their question: of everything AI could theoretically do across every occupation, what is it actually being used for day-to-day? The radar chart below tells the story.

🔵 Blue = what LLMs could theoretically handle.

🔴 Red = what's actually happening.

For Computer & Math (the most AI-saturated sector), Claude currently covers just 33% of tasks in practice. The gap between possible and practiced is enormous across almost every profession.

A few findings worth sitting with:

The most exposed workers tend to be older, more educated, female, and higher-paid. Not the entry-level roles most people picture when they hear "AI displacement". It's knowledge work and it's already happening.

The top three most exposed occupations are computer programmers (75% coverage), customer service reps (70%), and data entry (67%).

No increase in unemployment for highly exposed workers yet. But hiring of younger workers in those roles is slowing. That's the early signal.

My honest read is that the research is more useful as a methodology than as a prediction. Anthropic is saying "here's how we'll track this over time," not "here's the answer."

But the finding that sticks with me is the gap itself. The blue area is massive. The red area is small. And the teams we work with are living proof of why: they’ve been using AI for a year and usage is high, but actual capability is genuinely unclear. Most don't have a verifiable way to measure whether their people are getting better, not just more comfortable.

Use and capability aren't the same thing. And the size of that gap, for individuals and teams alike, is less a threat than an opportunity, if you're paying attention.

How to AI 🤖

Every week, this section is your shortcut. Here are a couple of ways you could try AI this week that are worth your time:

Before you go ✌️

This one's been on my mind for some time:

If you think about your own AI use over the past year, do you feel like you've genuinely gotten better at it, or just more comfortable with it? Is there even a difference?

Hit reply. I read them all.

See you next Sunday!

P.S. Want to make your team & company AI-first? Let us help here.